Most brands treating AI content for SEO as a volume play are about to lose everything they built. The strategy works right up until Google tweaks its algorithm, and then the traffic collapse is vertical. Entire content libraries go from driving six-figure monthly traffic to a rounding error inside a quarter.

One site published 6 million AI-generated articles. They peaked at 740,000 indexed pages. They are currently sitting at 422,000 and dropping every month. The crash is not a theory. It is already happening.

If you want the frameworks I use to separate AI content that compounds from AI content that collapses, I share them inside the free AI SEO Operators community. Come say hi.

What AI Content for SEO Actually Is

AI content for SEO is content produced wholly or partly by large language models and published with the intent of ranking on Google. That covers everything from a ChatGPT-drafted blog post with light human editing to fully automated mass publishing pipelines pushing thousands of pages a day.

The category gets conflated in public conversation. Using AI to help a human write a page is not the same as using AI to generate pages faster than any human could review them. Google does not see these the same way either, and neither should you. The difference between the two is the difference between compounding and collapsing.

Does AI Content Hurt Your SEO Rankings

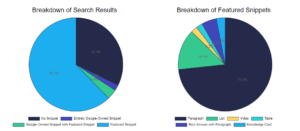

No. Google does not penalize content because it was produced by AI. An Ahrefs study of 600,000 web pages found the correlation between AI content percentage and ranking position was 0.011, which is effectively zero. Google’s own public stance is that the method of production is not what gets penalized. Intent to manipulate rankings is.

What does get penalized is low information gain. If your page covers the same ground as hundreds of existing pages without adding anything original, Google has no reason to index it, let alone rank it. AI makes it trivially easy to produce that kind of page at scale, which is why mass AI content strategies look like the problem even when the real problem is the underlying content being redundant.

The distinction matters because it tells you exactly where the line is. AI-assisted content that adds real information, experience, or data can rank. AI-generated content that summarizes what already ranks cannot.

Why Mass AI Content Strategies Collapse

Mass AI content strategies collapse because they rely on the algorithm continuing to reward the specific ranking pattern that made them work in the first place. When Google tweaks the algorithm to favor a different type of site for a given query type, the entire strategy evaporates overnight.

The dangerous part is the feedback loop during the good times. As the content library grows, traffic grows. The data tells the team that publishing more pages produces more traffic. Every internal dashboard confirms the strategy. The business forecasts more hires, more tools, more volume. Nobody sees the tipping point coming, because there is no data to show it until it arrives.

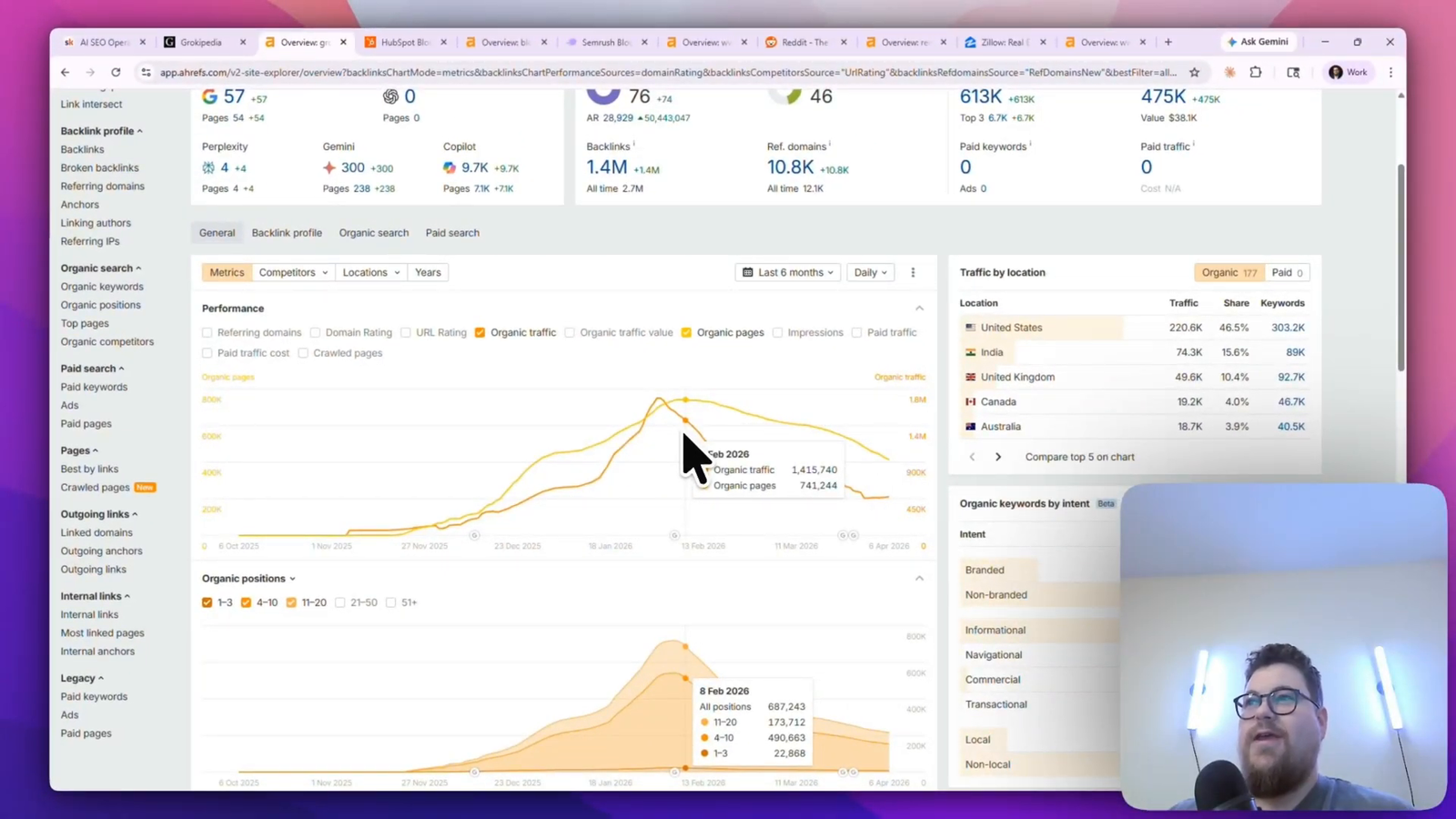

The Grokipedia case study

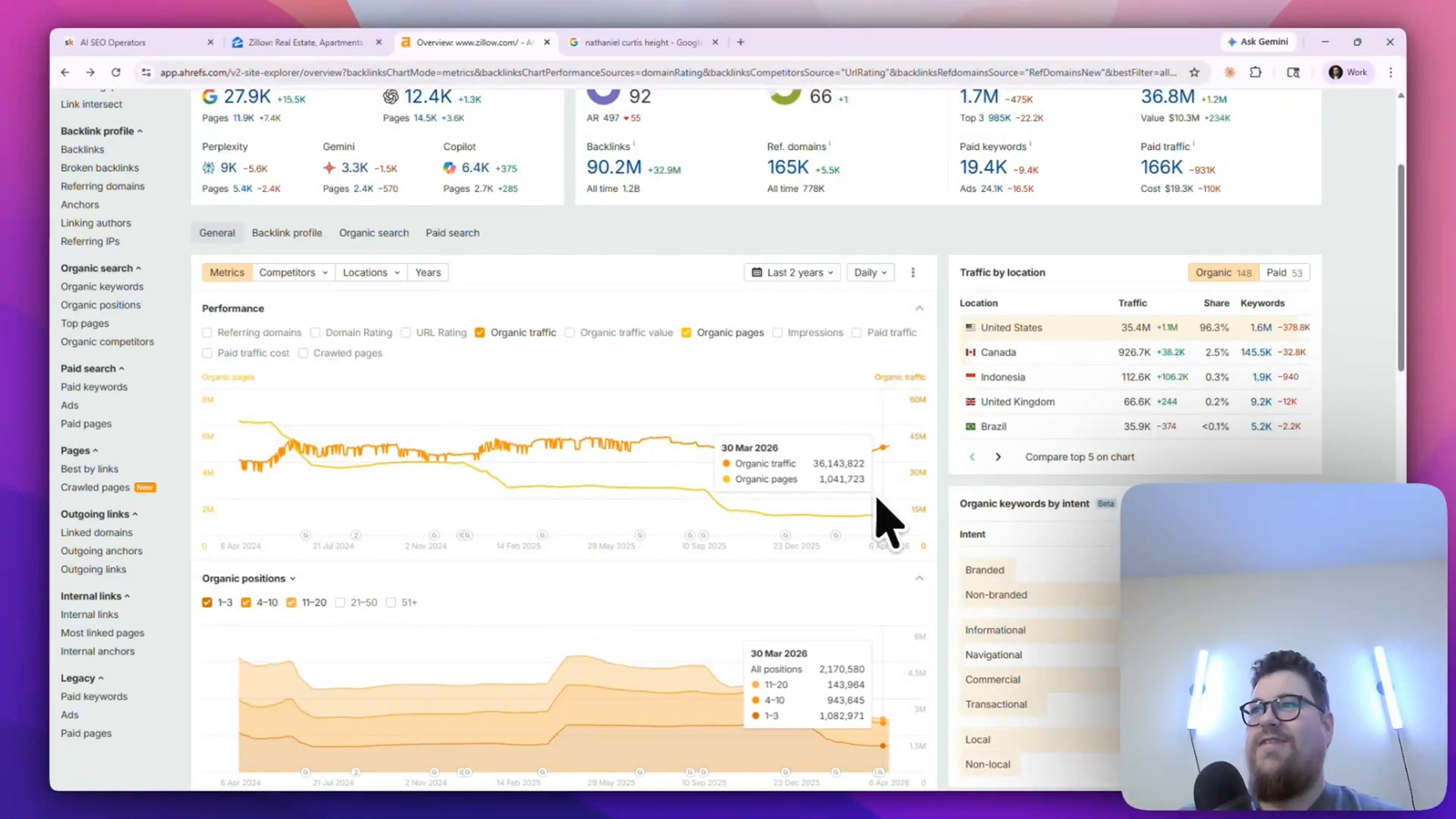

Grokipedia is an AI-generated version of Wikipedia. They published over 6 million articles, and their indexed page count peaked at around 740,000. Today they are at roughly 422,000 indexed pages and dropping.

The collapse was not caused by a single update. It took Google a handful of algorithmic tweaks to favor different sites for the query types Grokipedia was ranking for. When I audited the pages losing the most traffic, the pattern was immediate. Most of them were about people. One page about Nathaniel Curtis only ever ranked for a single long-tail query: “Nathaniel Curtis height.” Same for Jessica Darrow. One lucky keyword per page, multiplied across thousands of pages. That was the entire growth engine.

When Google decided that for people-related queries it preferred authoritative sources like IMDb, Hamilton Hodell, and Wikipedia itself, every one of those single-keyword wins disappeared at the same time. The strategy never had resilience. It had volume masking fragility.
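If you want to check your own library for the same fragility, here is a minimal sketch. It assumes you can export a mapping of page URL to the keywords that page ranks for from whatever rank tracker you use. The function name, the input shape, and the sample URLs are hypothetical, not any tool's real export format.

```python
def single_keyword_share(page_keywords: dict[str, list[str]]) -> float:
    """Fraction of ranking pages that rank for exactly one distinct keyword.

    `page_keywords` is a hypothetical export: URL -> list of ranking keywords.
    Pages with no ranking keywords are ignored.
    """
    ranking = {url: set(kws) for url, kws in page_keywords.items() if kws}
    if not ranking:
        return 0.0
    single = sum(1 for kws in ranking.values() if len(kws) == 1)
    return single / len(ranking)


# Illustrative input only; the URLs and second-page keywords are made up.
sample = {
    "/people/page-a": ["nathaniel curtis height"],
    "/people/page-b": ["some long-tail query"],
    "/guides/page-c": ["query one", "query two", "query three"],
    "/unranked/page-d": [],
}
share = single_keyword_share(sample)
```

A high share does not prove a collapse is coming, but it tells you how much of the library depends on one lucky keyword per page.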

The Traffic Graveyard: More Case Studies

The collapse pattern is not limited to one site or one strategy. Countless brands running different versions of “more pages equals more traffic” have now lost the majority of their organic traffic in under two years.

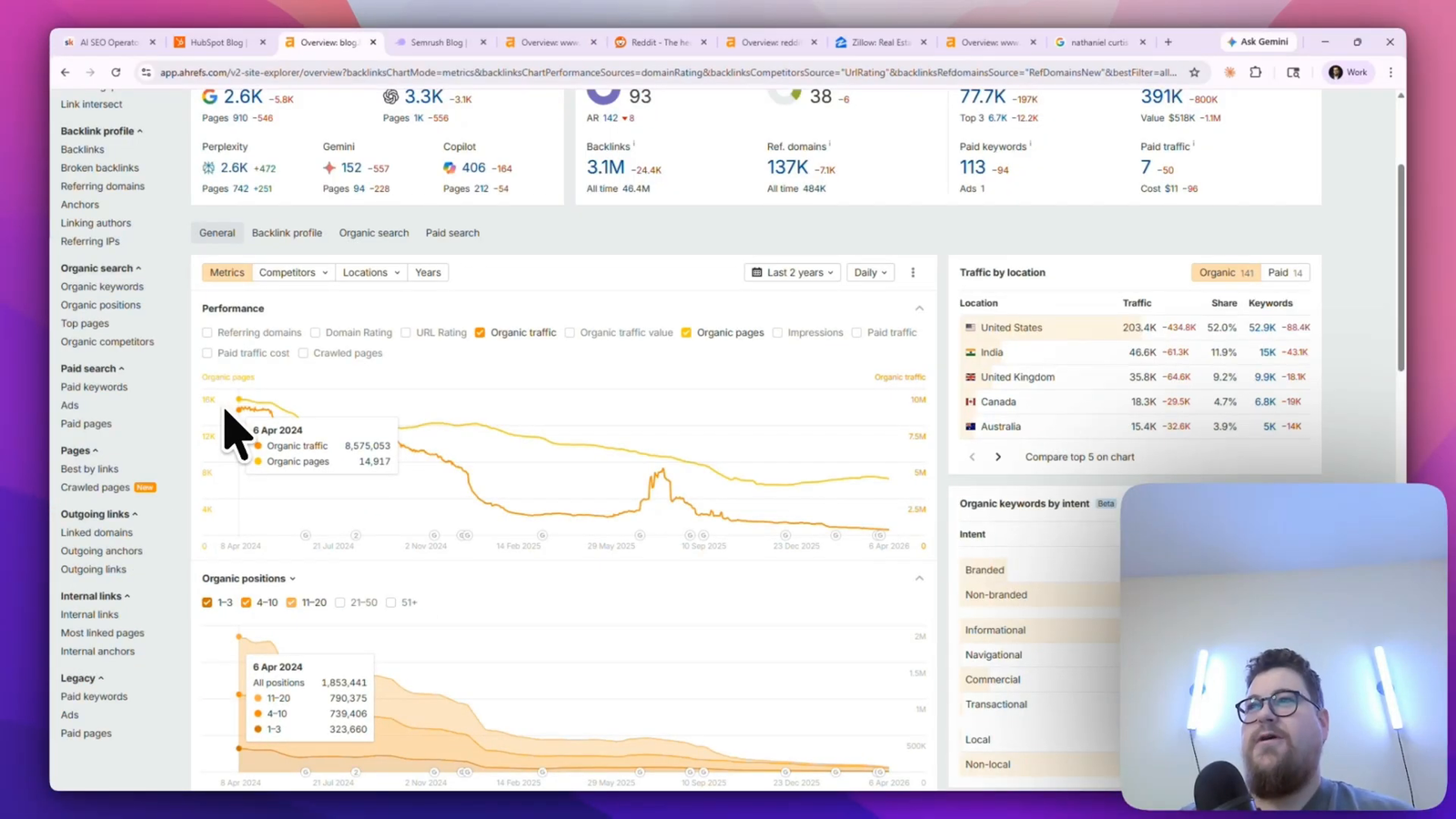

HubSpot’s blog: from 8M to 391K

HubSpot was the dominant content marketing brand for more than a decade. Their blog organic traffic was around 8 million visitors a month two years ago. It is now at 391,000. There is no sign the decline is slowing.

The pages that lost the most traffic tell the story clearly. Inspirational quotes. Resignation letters. Cover letter examples. Shrug emoji. What is your greatest weakness. Color theory design. HubSpot was a marketing software company publishing pages about literally anything that might drive traffic. Across multiple algorithm updates, Google shifted to favor sites that stay in their lane. HubSpot’s lane got redefined for them.

HubSpot knows this. They are actively killing pages that no longer fit the brand and keeping pages that do. They gave up on the resignation letter page and let indeed.com take it. The issue is that the damage to AI visibility is harder to reverse. Their AI Overview coverage peaked and has since dropped to around 2,000, which is extraordinarily low for a site of their authority.

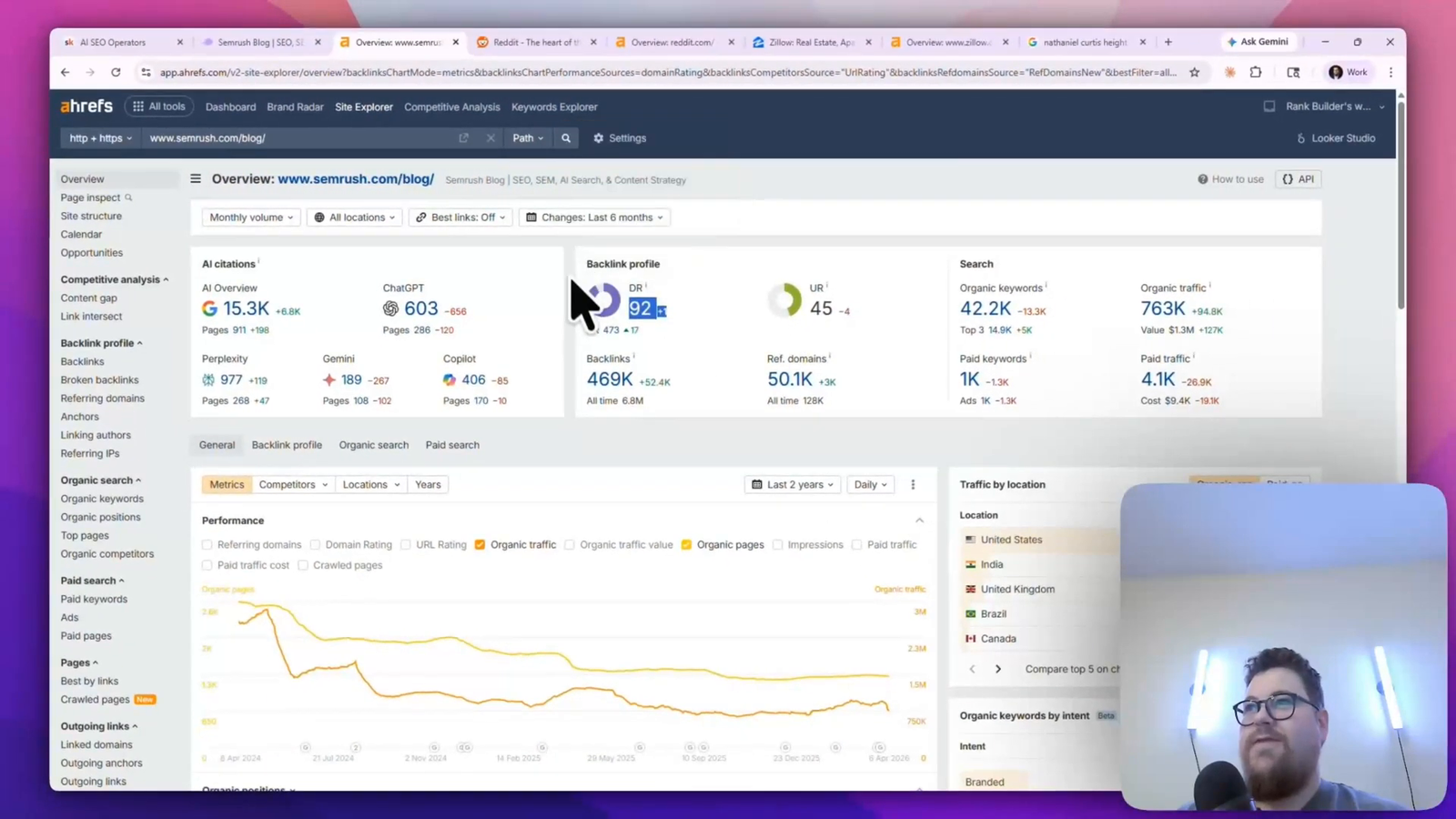

SEMrush’s glossary problem

SEMrush’s blog used to drive over 2 million monthly organic visits. It is currently at 763,000 and declining. Authority is not the problem. Their domain rating and backlink profile are both strong.

The pages losing the most traffic were glossary-style pages. What is SEO. What is Bing AI. What is keyword research. Affordable SEO. SEMrush built a giant definitional library during a period when that was how people searched for the meaning of a term. The behavior shifted. Today, if someone wants to know what SEO is, they ask ChatGPT or Gemini and get a synthesized answer that does not require reading anything. The page that used to capture that query has nothing to capture anymore.

The pattern across all three

Every site losing this way was offering something AI can now do directly. Answering questions. Defining things. Summarizing topics. When the behavior shifted and the algorithm shifted with it, the entire category of content got deprioritized at once. The sites that built their strategy on top of that category had no second layer to fall back on.
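To put the three declines on the same scale, here is a quick sketch of the arithmetic using only the figures quoted in this post. The numbers are approximate as stated in the text, and the Grokipedia figure is indexed pages rather than visits.

```python
def pct_decline(before: float, after: float) -> float:
    """Percentage drop from `before` to `after` (positive means a decline)."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Figures as quoted in this post (approximate).
cases = {
    "HubSpot blog traffic": (8_000_000, 391_000),
    "SEMrush blog traffic": (2_000_000, 763_000),    # "over 2 million", so a lower bound
    "Grokipedia indexed pages": (740_000, 422_000),  # indexed pages, not visits
}

for name, (before, after) in cases.items():
    print(f"{name}: {pct_decline(before, after):.1f}% decline")
```

That works out to roughly 95% for HubSpot, at least 62% for SEMrush, and about 43% of peak indexed pages for Grokipedia.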

Why Some Sites Still Win With Massive Page Counts

Not every site with millions of pages is collapsing. Two are growing while their peers deflate. The difference is what they offer Google that AI cannot.

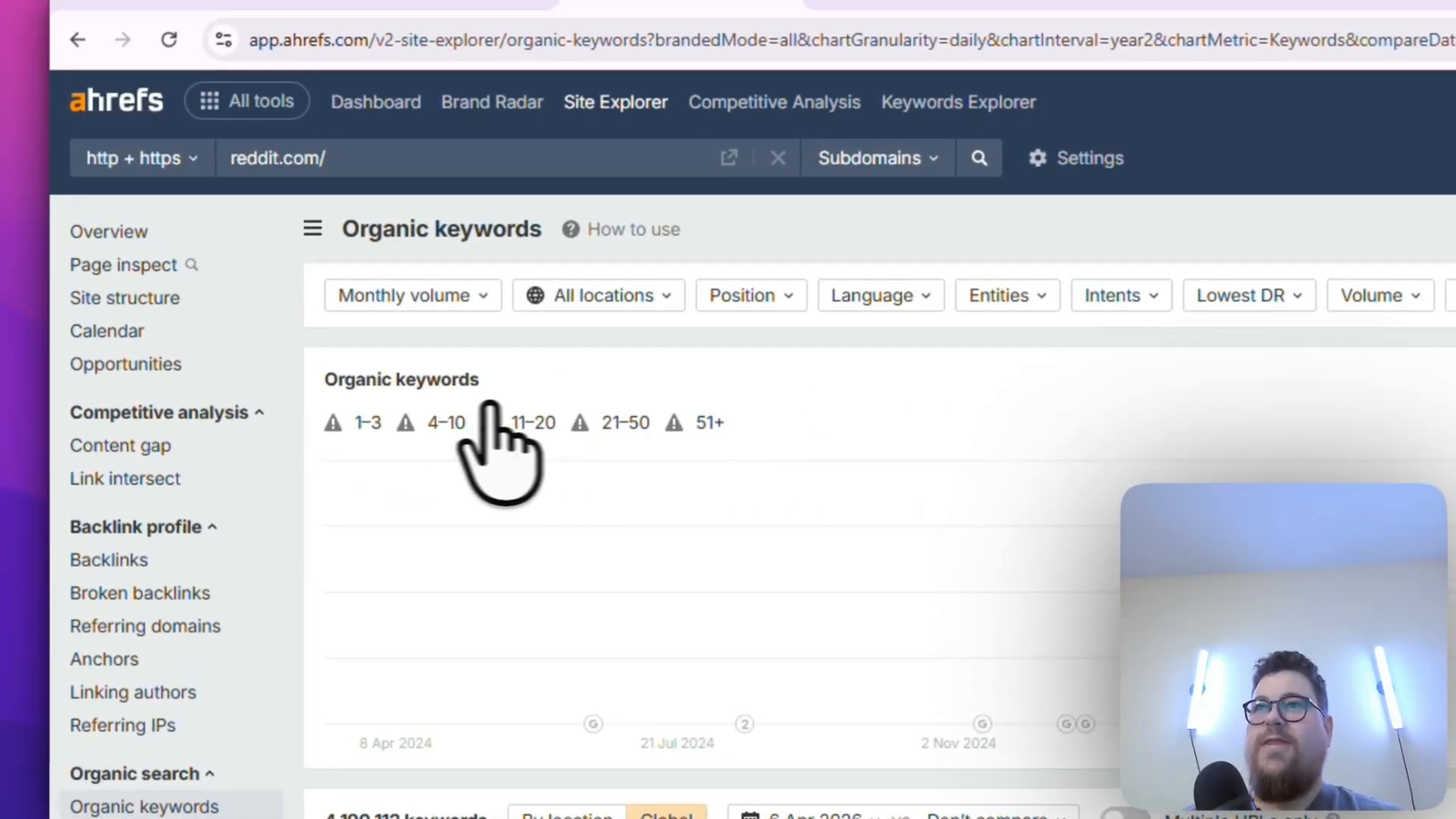

Reddit’s unique data advantage

Reddit is sitting at nearly a billion monthly organic visits across 55 million pages. They have shed a significant portion of their indexed pages recently, and their traffic has kept climbing. They rank for over 4 million AI Overviews globally, enough that the chart does not even load properly.

There is a fair argument that Google gives Reddit preferential treatment through explicit deals. That argument is true and incomplete. The deeper reason Reddit wins is that they produce something Google structurally needs: user-generated content on every topic imaginable. AI cannot generate a real human’s lived opinion on a niche product, a real thread of a community debating an idea, or a real answer from someone who has actually done the thing. Reddit has that at a scale no other site does. Google needs it for answer quality. The traffic follows.

Zillow’s programmatic SEO done right

Zillow has around 36 million monthly organic visits and just under a million pages. Two years ago they had 6 million pages and roughly the same traffic. They deliberately shrank their index while holding the traffic line.

Zillow’s programmatic SEO strategy is one of the best examples of the model executed correctly. Every page they publish is built on unique data. Real listings. Real prices. Real geographic specificity. An AI chatbot cannot give you the current asking price on 247 Maple Street because that data lives inside Zillow’s database. They also route their authority intelligently. Their “houses for sale near me” category page is the second-highest traffic page on the entire site after the homepage, and they flow links to it from every surrounding page on the network.

The lesson inside Zillow’s model: programmatic SEO at scale still works, but only when each page offers something the indexed web does not already have.

The Three Conditions AI Content for SEO Must Meet

Every AI content strategy that compounds past an algorithm update meets at least one of three conditions.

The first is unique data. Information that is not freely available anywhere else on the web and that users genuinely search for. Zillow’s model. Real estate pricing data. Proprietary analytics. Industry-specific metrics you collect and publish. If the data is exclusive, the page is hard to replace.

The second is content Google structurally needs. User-generated content is the clearest example. First-hand experience, real product reviews, authentic community discussions. Google has publicly signaled that first-hand experience is part of their quality evaluation, and UGC is the most scalable source of it. If your site produces this natively, you have an asset that AI cannot replicate.

The third is radical topical focus. Staying tightly inside the lane your brand actually belongs to and refusing to publish content that does not fit. HubSpot’s current pivot. You lose the ability to chase any keyword that looks interesting, and you gain the ability to be the authoritative source for the keywords that actually match your business.

If your AI content strategy does not satisfy at least one of these three, the strategy is borrowing time from the algorithm. It will work until it does not.

The Stay-in-Your-Lane Test

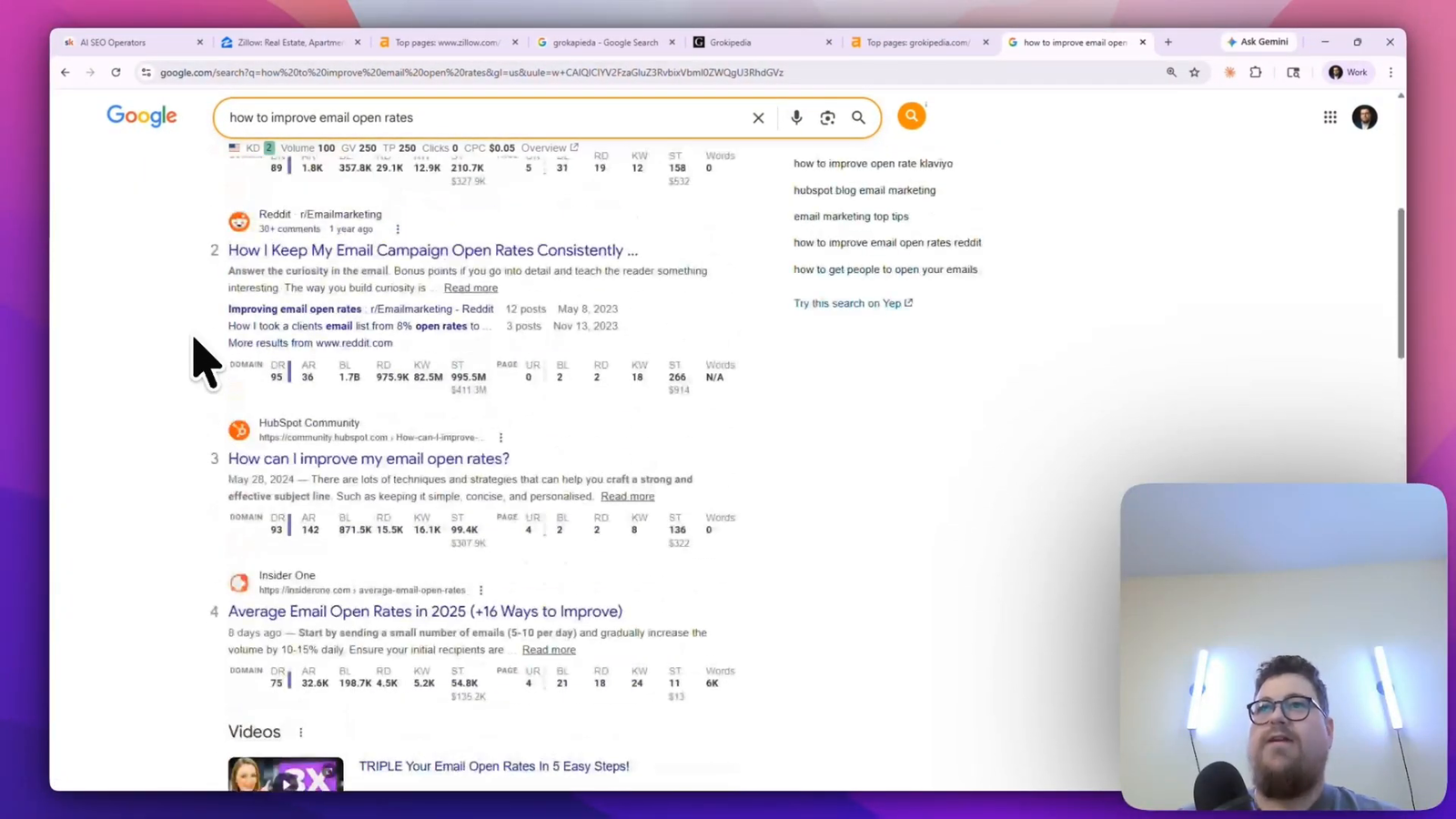

There is a simple test for whether a topic is inside your lane. Google the keyword. Look at who is ranking.

If you are an invoicing software company considering a post on “how to improve email open rates,” run the search. The first page is dominated by Campaign Monitor, HubSpot, Reddit, and other email and marketing-focused sites. You have to go to page four before finding anything close to invoicing software, and even then it is a community page from Square that drives no traffic. The keyword is not in your lane. Making that page is a guaranteed time sink.

Flip it. If you are the same invoicing software and you look at “how to write an invoice email,” the first page is GoCardless, Stripe, and other billing-focused brands. Now you are in the right neighborhood. Similar sites are already ranking, which means Google has decided this query type belongs to sites like yours.

The test works because Google’s results page is a live snapshot of which brand category Google believes the query belongs to. If your brand category is absent from the top 30, you are not going to win the keyword, and you should not waste AI content production capacity trying.
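The test can be sketched in a few lines, assuming you have already pulled the ranking domains for a keyword and labeled each one with a brand category by hand. The function name, the category labels, and the `depth` default of 30 (taken from the "top 30" rule above) are illustrative assumptions, not a real SERP API.

```python
def in_lane(serp_categories: list[str], brand_category: str, depth: int = 30) -> bool:
    """Return True if `brand_category` appears anywhere in the top `depth` results.

    `serp_categories` is a hand-labeled list, ordered by ranking position,
    with one category label per ranking result.
    """
    return brand_category in serp_categories[:depth]


# Hand-labeled, illustrative results for the two example queries.
email_open_rates = ["email marketing", "marketing software", "community"]
invoice_email = ["billing software", "payments", "billing software"]

skip_it = not in_lane(email_open_rates, "billing software")
write_it = in_lane(invoice_email, "billing software")
```

The labeling step is the real work. The code only makes the decision rule explicit: no similar brand in the top 30, no page.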

Answer Engine Optimization as a Quick Fix

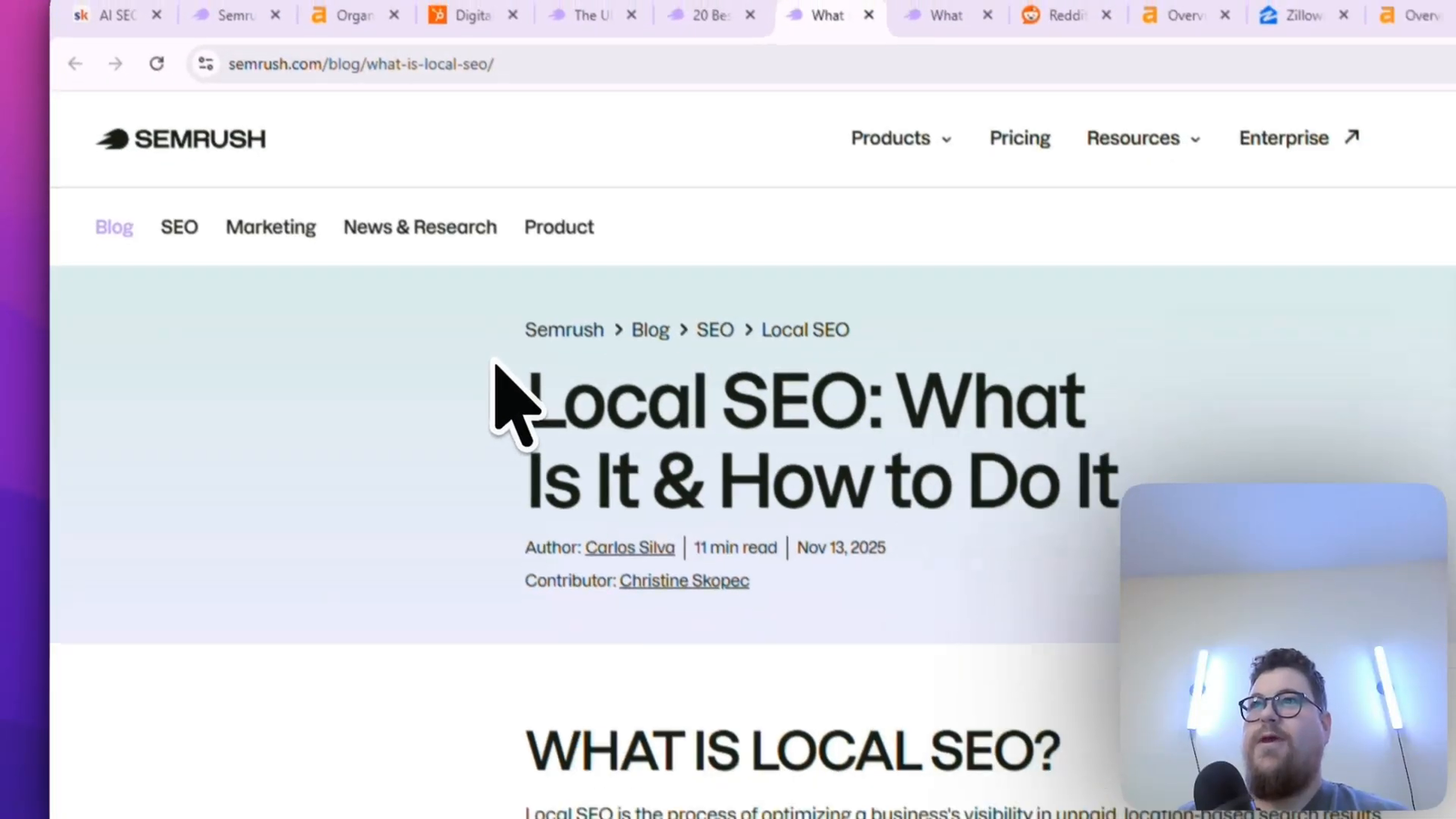

SEMrush is losing organic traffic but winning AI Overview coverage. Two years ago they appeared in zero AI Overviews. Today they appear in over 10,000. The reason is structural.

If you look at SEMrush’s glossary pages, almost all of them lead with the definition. The “what is local SEO” page opens with one sentence: local SEO is the process of optimizing a business’s visibility in unpaid location-based search results. No introduction. No table of contents. No “in this guide we will cover” boilerplate. The answer is the first thing on the page.

HubSpot’s “what is digital marketing” page does the opposite. It opens with an introduction, a scrolling table of contents, and several hundred words of preamble before it actually defines digital marketing. That is the difference in AI Overview coverage explained in a single page layout choice.

Stripping the intro and leading with the direct answer is the single most effective quick fix for AI visibility on an existing page. It does not save a collapsing traffic strategy, but it does buy AI citation coverage that would otherwise go to a cleaner-structured competitor. Do this across your top pages before you do anything else.
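A rough way to triage your top pages is to check whether the first sentence of the body is the definition itself. The sketch below is a heuristic under stated assumptions: it takes plain body text you have already extracted, and the phrase list and 40-word cap are arbitrary choices of mine, not anything Google publishes.

```python
import re


def leads_with_answer(body_text: str, term: str, max_words: int = 40) -> bool:
    """Heuristic: does the first sentence define `term` directly?

    Looks for "<term> is/are/refers to/means ..." at the very start of the page
    body and requires the sentence to be reasonably short.
    """
    first_para = body_text.strip().split("\n\n", 1)[0]
    first_sentence = re.split(r"(?<=[.!?])\s+", first_para, maxsplit=1)[0]
    pattern = rf"^\s*{re.escape(term)}\s+(is|are|refers to|means)\b"
    starts_with_definition = re.match(pattern, first_sentence, flags=re.IGNORECASE)
    return bool(starts_with_definition) and len(first_sentence.split()) <= max_words


answer_first = leads_with_answer(
    "Local SEO is the process of optimizing a business's visibility "
    "in unpaid location-based search results.\n\nMore detail follows.",
    "local SEO",
)
intro_first = leads_with_answer(
    "In this guide we will cover everything you need to know.\n\n"
    "Digital marketing is a broad field.",
    "digital marketing",
)
```

Pages that come back `False` are the ones to open and restructure by hand. The check will miss valid answer-first openings that use different phrasing, so treat it as a shortlist, not a verdict.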

How AI Content for SEO Fits Into the Bigger Picture

AI content for SEO is one layer of a larger discipline. AI SEO as a field is the convergence of traditional SEO, generative engine optimization, answer engine optimization, and agentic automation. Content production is where most teams start, and it is also where most teams confuse motion for progress.

The sites winning are treating content as one output of a system that also handles topical authority, on-page structure for AI retrieval, citation-worthy data assets, and interlinking that flows authority to the pages that drive revenue. The sites losing are treating AI content as a volume dial they can turn up indefinitely. The two models look similar from the outside and produce completely different outcomes over 18 months.

Frequently Asked Questions About AI Content for SEO

Can Google detect AI-generated content?

Google does not confirm that it uses an AI detector, and the company has publicly said that the method of production is not the penalty vector. What Google can detect reliably is low information gain, thin content, and pages that add nothing to the existing index. If your AI content triggers those signals, the method is irrelevant.

How much AI-assisted content is safe to publish?

The safe upper bound is not a percentage. It is a test. Can each page stand on its own as an original contribution to the index, meaning a piece of content that a reader could not find in the first five results already ranking? If yes, you are clear regardless of how much AI was involved in the draft. If no, you are at risk regardless of how small a percentage the AI contribution was.

Can AI content rank on the first page of Google?

Yes. The same Ahrefs study of 600,000 pages found AI-generated or AI-assisted content across the top 20 ranking pages for the majority of queries tested. Ranking is possible. The constraint is originality, not origin.

How long does it take for a mass AI content strategy to collapse?

The lag between peak performance and collapse in the case studies covered in this post ranged from two to twenty-four months. Grokipedia collapsed inside a year. HubSpot took closer to two years. The variable is how quickly Google refines the query types your content was ranking for. There is no way to predict the tipping point from inside the growth curve.

Should I kill existing AI-generated pages or refresh them?

Audit them against the three conditions. If a page satisfies unique data, user-generated content, or tight topical fit, keep and refresh it. If it satisfies none, kill it. Pages that only ranked because the algorithm briefly rewarded them are liabilities once the algorithm shifts, because they absorb crawl budget and dilute topical focus without contributing traffic.
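The audit rule is simple enough to write down as code. This is a minimal sketch, assuming a human reviewer has already answered the three yes/no questions for each page. The class, field names, and example URLs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PageAudit:
    url: str
    has_unique_data: bool       # condition 1: data not freely available elsewhere
    has_ugc_or_first_hand: bool  # condition 2: content Google structurally needs
    fits_topical_lane: bool     # condition 3: tight fit with the brand's lane


def decide(page: PageAudit) -> str:
    """Keep and refresh if any one condition holds; otherwise kill the page."""
    if page.has_unique_data or page.has_ugc_or_first_hand or page.fits_topical_lane:
        return "keep_and_refresh"
    return "kill"


# Illustrative pages only.
pages = [
    PageAudit("/pricing-data/example", True, False, True),
    PageAudit("/blog/off-topic-example", False, False, False),
]
decisions = {page.url: decide(page) for page in pages}
```

The hard part is answering the three questions honestly, especially the topical-fit one. The code just keeps the decision consistent across a large audit.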

Should I use AI for content strategy or just content production?

AI is better used for strategy work than for production work. Keyword research, query clustering, topical gap analysis, content brief generation, and internal link mapping are all high-leverage applications. Using AI to draft the page itself is the lowest-value use and the one most likely to produce the redundancy that triggers information-gain problems.

Join the Free AI SEO Operators Community

If this framing clicked, the actual systems and validation frameworks behind it live inside the community. Come join the AI SEO Operators community on Skool. It is free, no catch.

I also share a free Claude skill inside the community that acts as a content validation checklist, so you can run any AI content idea through it before you commit production time to the page. The operators showing up consistently are the ones building AI content strategies that compound past algorithm updates. Pull up a chair.